Lately for my business, I’ve been recommending the QNAP TS-831x to clients. As I have a few of them sitting around my lab now, I decided to abuse them a little.

In the world of SOHO NAS devices, most people would solidly recommend Synology. Arguably, the software is second to none, but then again so is the price tag. And after a very bad experience with a DS2015xs, I decided to give QNAP a try.

Since then, I’ve been recommending the TS-831x to clients. For the price, the performance is stellar and it’s a steal at $799. It has dual SFP+ ports, software that is practically indistinguishable from Synology, and eight 6Gbps SATA ports, which is something that the equivalent Synology didn’t have until this year’s hardware update. It does have a pretty lackluster ARM CPU in it, so I definitely wouldn’t recommend it for people who like to mix their compute and their storage.

As I have a few of these devices now as spares and backups, I decided it was time to explore what else they can do.

Virtual JBOD

I’ve started using ZFS again as my primary storage, and I would love to be able to use one of these TS-831x units to back a ZFS pool that serves as a receive target for a fourth-tier backup.

A capability that the TS-831x advertises is “Virtual JBOD”. Digging into that concept, the idea is to export iSCSI LUNs from other devices and use them as part of the storage pool on the QNAP.

On the QNAP, with the basic RAID that it uses, that’s reasonably safe. Of course, with ZFS, that’s not what I want.

I wanted it in the opposite direction: the QNAP exporting its disks as iSCSI LUNs, with ZFS running on another machine on top of them.

The players:

- A QNAP TS-831x

- 5x6TB Western Digital Red Drives (the 5400 RPM non-pro version)

- Ubuntu 17.04 VM on ESXi

- End-to-end 10Gb

DO NOT EVER DO THIS

When you are talking about ZFS, there is a hard and fast rule: give ZFS direct access to the disks. Many of the horror stories you hear about people losing data start with something like “I have a RAID card and not an HBA, so I created single RAID stripes out of the disks, etc.”

Needless to say, doing something like this is probably not recommended:

For the uninitiated, I’ve taken five 6TB drives in a TS-831x, created separate storage pools out of them, and exported each pool as an iSCSI target.


I’m not entirely sure this is the best way to set this up, but it was the only way I could make it work. Of course the QNAP is screaming the entire way. It wants to be a complete storage+media device, not just a slave for some drives.

ZFS and Ubuntu and QNAP

The goal of all of this was to be able to take periodic ZFS snapshots of a live pool and send them to the QNAP. Of course, unless you are using enterprise-level QNAP devices, ZFS isn’t supported.

After many years of FreeNAS, I’ve started using Ubuntu for all my ZFS needs.

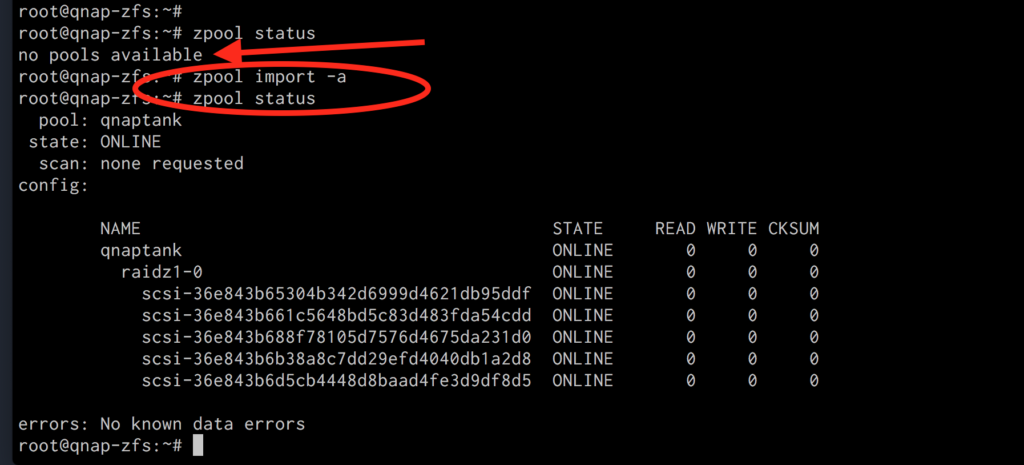

iSCSI is simple to set up now and well-covered on one of their guides. After the normal ZFS-creation stuff, I had a RAIDz1 pool of my QNAP drives.
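As a rough sketch of what that "normal" setup can look like from the Ubuntu side, assuming the open-iscsi `iscsiadm` tool and the stock `zpool` command: the portal address and the `/dev/sdX` device names below are hypothetical placeholders, not the values I used, and the script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch only: PORTAL and the device paths are hypothetical placeholders.
PORTAL="${PORTAL:-192.0.2.10}"   # hypothetical QNAP address (documentation range)
POOL="${POOL:-qnaptank}"         # pool name used later in this post
DRY_RUN="${DRY_RUN:-1}"          # 1 = print the commands instead of running them

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

attach_and_create() {
    # Ask the QNAP which iSCSI targets it exports.
    run iscsiadm -m discovery -t sendtargets -p "$PORTAL"
    # Log in to the discovered targets on that portal.
    run iscsiadm -m node -p "$PORTAL" --login
    # Build one single-parity RAIDZ vdev from the LUNs passed as arguments.
    run zpool create "$POOL" raidz1 "$@"
}

# Five LUNs, one per physical drive (device names are assumptions).
attach_and_create /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf
```

In practice, stable paths under `/dev/disk/by-path/` are probably a safer choice than `/dev/sdX` names, since iSCSI devices are not guaranteed to come up in the same order on every boot.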

It’s important to note that on every reboot, I had to manually import the pool. I’m sure it’s just a simple dependency loading-order issue: ZFS is loading before iSCSI. For this testing and backup, I didn’t bother to fix it.
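The manual step after a reboot amounts to something like the sketch below: log back in to the iSCSI targets, wait for the block devices to appear, then import the pool. This assumes the node records from the earlier discovery are still in the open-iscsi database. The function name is made up for illustration, and the script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch only: run after boot, once networking is up.
POOL="${POOL:-qnaptank}"   # pool name used in this post
DRY_RUN="${DRY_RUN:-1}"    # 1 = print the commands instead of running them

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

reattach_pool() {
    # Log in to every iSCSI node record known to this host.
    run iscsiadm -m node --loginall=all
    # Wait for udev to finish creating the new block devices.
    run udevadm settle
    # Import the pool now that its backing devices exist.
    run zpool import "$POOL"
}

reattach_pool
```

A proper fix would make the ZFS import wait for the iSCSI login at boot, but a small script like this is enough for a backup box that rarely restarts.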

Send and Receive

Once I had a working ZFS pool running on the TS-831x via an Ubuntu VM, it was time to get to work. On the original zpool:

zpool get allocated

NAME PROPERTY VALUE SOURCE

tank allocated 12.2T -

12.2T of data should be enough to thoroughly break it in.

I assumed that if the setup was going to break, a ZFS send/receive would be a good way to cause it to implode.

zfs send -R [email protected]| ssh [email protected] zfs recv qnaptank/tankbackup

While loading, it showed decent performance:

Crash and Burn

Well, to my surprise, it didn’t actually crash or burn.

My custom ZFS snapshot send/receive management script had a bug in it, so I ended up sending the 12.2T of data twice because my script trashed the first copy. That means my stress test was inadvertently doubled.

Anyway, exactly 24 hours and 13 minutes later (after starting over), I had a copy of 12.2T of data on the ZFS/QNAP setup. That’s a solid 160MB/s to a RAIDz1 over SSH and iSCSI. There were no drive or other checksum errors, and some random verification of the data showed it was fully intact.
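For anyone who wants to sanity-check a number like that, here is a back-of-the-envelope average using only the size and elapsed time above. It assumes the whole 12.2T went over the wire in that window and that "T" means tebibytes (2^40 bytes), as the ZFS tools report it; the function name is made up for illustration.

```shell
#!/bin/sh
# Crude average throughput: total bytes divided by elapsed seconds.
# Assumption: "12.2T" is tebibytes (2^40 bytes each).
avg_mb_per_s() {
    tenths_tib=$1   # size in tenths of a TiB (122 = 12.2T), keeps the math in integers
    hours=$2
    minutes=$3
    bytes=$(( tenths_tib * 1099511627776 / 10 ))
    seconds=$(( hours * 3600 + minutes * 60 ))
    # Whole decimal megabytes per second, rounded down.
    echo $(( bytes / seconds / 1000000 ))
}

avg_mb_per_s 122 24 13
```

Under those assumptions the crude average comes out a little lower than the figure quoted above, at roughly 153MB/s, so treat both numbers as ballpark values rather than a benchmark.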

And even subsequent sends/receives of updated snapshots completed without issue, though those are usually slower than the initial:
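For reference, a follow-up run can be sketched roughly like this. It is not my management script, just a minimal illustration of an incremental replication send: the snapshot names and the SSH destination are hypothetical, and using `-F` on the receiving side is an assumption about how you want rollbacks handled. The script only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Sketch only: snapshot names and REMOTE are hypothetical placeholders.
SRC="${SRC:-tank}"                      # source pool from this post
DEST="${DEST:-qnaptank/tankbackup}"     # receive target from this post
REMOTE="${REMOTE:-backup@192.0.2.20}"   # hypothetical user@host of the Ubuntu VM
DRY_RUN="${DRY_RUN:-1}"                 # 1 = print the commands instead of running them

send_incremental() {
    prev=$1   # snapshot that already exists on both sides
    new=$2    # snapshot to create and send now
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ zfs snapshot -r $SRC@$new"
        echo "+ zfs send -R -i $SRC@$prev $SRC@$new | ssh $REMOTE zfs recv -F $DEST"
    else
        # Recursive snapshot of the live pool.
        zfs snapshot -r "$SRC@$new"
        # Send only the changes between the two snapshots.
        zfs send -R -i "$SRC@$prev" "$SRC@$new" | ssh "$REMOTE" zfs recv -F "$DEST"
    fi
}

send_incremental backup-1 backup-2
```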

Migrating a VM to the new “QNAP ZFS” array, it showed decent performance when running disk tests inside of the VM:

For RAIDz1 on slow drives, over an iSCSI connection, not bad at all.

Conclusion

It goes without saying that this is probably not a setup someone should actually run. I imagine the pool is going to explode at some point. For now, it’s fine as a fourth tier of backup.

If nothing else, it’s analogous to setting up individual RAID0 on 5 separate drives (which is a huge NO-NO), and then exporting it over a network connection (also probably a huge NO-NO).

I’m currently gathering and writing up some benchmarks on one of these TS-831x devices, just using it as intended. The results will be posted later, but I will say these are fully capable devices for any basic home or small business setup.